Picture this: You’ve built an amazing website. Your content is gold. But somehow, Google isn’t crawling the pages you want, and AI chatbots are completely ignoring you. Sound familiar? Let me introduce you to two tiny files that could change everything – or waste your time completely.

What Is Robots.txt? (The OG Boss of Website Access)

Okay, let’s start simple.

Robots.txt is like the bouncer at your website’s door. It tells search engines and bots which parts of your website they can visit and which parts are off-limits.

Think of your website as a house. Robots.txt is you standing at the door saying, “Hey Google, you can check out the living room and kitchen, but stay out of my bedroom and closet.”

Here’s what robots.txt actually does:

It controls which pages search engines can crawl (visit and read). It helps you manage your crawl budget (how much time Google spends on your site). It keeps crawlers away from duplicate or low-quality pages. It discourages bots from fetching private or under-construction pages, although it does not guarantee they stay out of search results (more on that below).

Every website should have a robots.txt file. It’s been around since 1994 – yes, it’s older than Google itself!

Where does it live?

Always at the root of your domain: yourwebsite.com/robots.txt

Go ahead, try it right now. Type any website URL followed by /robots.txt and see what shows up. Try google.com/robots.txt – even Google has one!
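If you would rather let a script work out the address, here is a minimal Python sketch of the “always at the root” rule. The helper name `robots_url` is made up for illustration, and it only builds the address; it does not download anything.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_url(page_url: str) -> str:
    """Return the robots.txt address for the site that hosts page_url."""
    parts = urlsplit(page_url)
    # Keep the scheme and host, swap in /robots.txt, drop query and fragment.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


print(robots_url("https://yourwebsite.com/blog/some-post?ref=home"))
```

Note that the host is kept exactly as given, so a subdomain such as shop.yourwebsite.com gets its own robots.txt address.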

What Is LLMs.txt? (The New Kid Everyone’s Talking About)

Now here’s where things get interesting – and controversial.

LLMs.txt is a brand new proposal designed specifically for AI chatbots like ChatGPT, Claude, Gemini, and Perplexity.

LLM stands for “Large Language Model” – basically, the technology behind AI chatbots.

The idea is simple: create a special file that tells AI platforms the best way to understand and summarize your website content. Think of it as giving AI a “cheat sheet” about your website.

The concept sounds great, right?

You create a curated version of your content. AI reads this instead of crawling your entire site. Your content gets represented accurately in AI search results. You increase visibility in ChatGPT, Perplexity, and other AI platforms.

But here’s the plot twist that nobody’s telling you…

No major AI platform has confirmed that it actually uses it. Not a single one.

Not ChatGPT. Not Claude. Not Gemini. Not Perplexity. None of them.

Let me repeat that because it’s important: LLMs.txt is just a proposal. It’s not an official standard. No major AI company has agreed to use it.

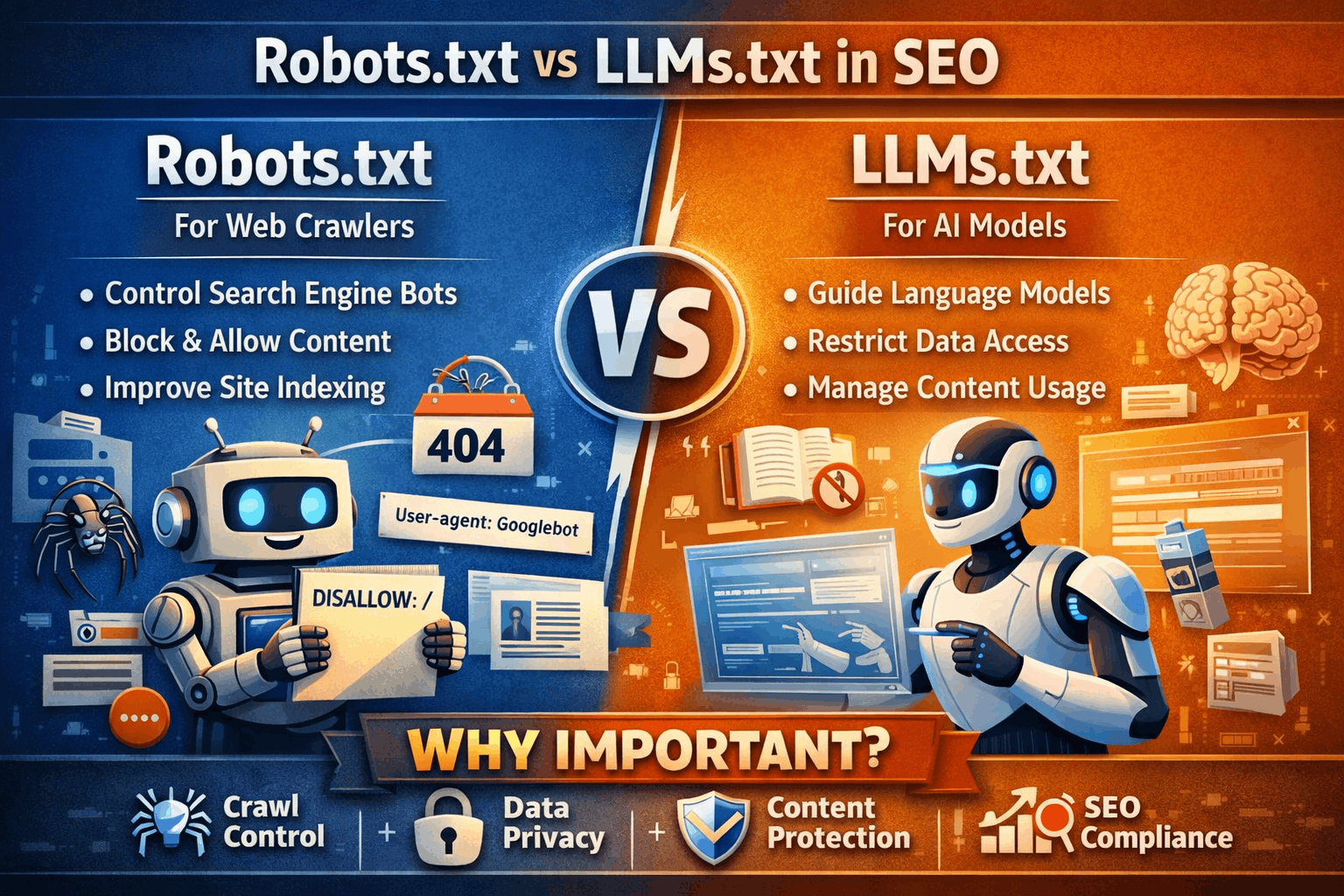

The Big Difference: Robots.txt vs LLMs.txt

Let me break this down in the simplest way possible:

Robots.txt:

- Been around since 1994

- Used by Google, Bing, and all major search engines

- Actually works and has proven results

- Controls what search engines can and cannot access

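- Formalized as an IETF standard (RFC 9309) in 2022 and honored by all major search engines (compliance is voluntary, so badly behaved bots can ignore it)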

- Essential for SEO

LLMs.txt:

- Created in 2024

- NOT used by any AI platform currently

- Unproven and speculative

- Meant to provide curated content for AI

- Not an official standard

- Optional and mostly unnecessary right now

It’s like comparing a passport (robots.txt) to a proposed future digital ID (llms.txt) that no country has agreed to accept yet.

How Robots.txt Actually Works (With Real Examples)

Let me show you how to use robots.txt the right way.

Basic Structure:

User-agent: *

Disallow: /admin/

Disallow: /login/

Allow: /blog/

Let me translate that for you:

- User-agent: * = “Hey, this applies to all bots”

- Disallow: /admin/ = “Don’t go into my admin section”

- Allow: /blog/ = “But you can definitely check out my blog”
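To see these rules in action without touching a live site, here is a small sketch using Python’s built-in `urllib.robotparser`. The test paths are made-up examples, and Python’s parser is simpler than Google’s, so treat the output as a rough check rather than the final word.

```python
from urllib.robotparser import RobotFileParser

RULES = """\
User-agent: *
Disallow: /admin/
Disallow: /login/
Allow: /blog/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text that is already in memory (no network access)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(RULES)
# Hypothetical paths, chosen only to exercise each rule.
for path in ("/admin/settings", "/login/", "/blog/my-post", "/about"):
    verdict = "allowed" if parser.can_fetch("*", path) else "blocked"
    print(path, "->", verdict)
```

Anything that matches no rule at all (like /about here) is allowed by default.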

Real-World Example 1: Blocking Private Pages

User-agent: *

Disallow: /private/

Disallow: /draft/

Disallow: /testing/

This tells search engines: “These sections are not ready for public viewing. Stay away.”

Real-World Example 2: Allowing Specific Bots Only

User-agent: Googlebot

Allow: /

User-agent: *

Disallow: /

Translation: “Google, you can access everything. Everyone else, stay out.”
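Here is a quick sketch of how a parser reads those two groups, again using Python’s standard `urllib.robotparser`. “ExampleBot” and the /pricing path are invented stand-ins for “everyone else” and “some page”.

```python
from urllib.robotparser import RobotFileParser

RULES = """\
User-agent: Googlebot
Allow: /

User-agent: *
Disallow: /
"""

parser = RobotFileParser()
parser.parse(RULES.splitlines())


def may_crawl(bot_name: str, path: str = "/pricing") -> bool:
    """Return True if the named bot may fetch the path under RULES."""
    return parser.can_fetch(bot_name, path)


print("Googlebot:", may_crawl("Googlebot"))
print("ExampleBot:", may_crawl("ExampleBot"))
```

A bot uses the group that names it; only bots without a group of their own fall back to the `*` rules.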

Real-World Example 3: Controlling Crawl of Specific File Types

User-agent: *

Disallow: /*.pdf$

Disallow: /*.doc$

This blocks bots from crawling PDF and DOC files on your site.
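The `*` and `$` characters are pattern symbols: `*` stands for any run of characters and `$` pins the rule to the end of the URL. To my knowledge Python’s built-in robots.txt parser does not interpret them, so the sketch below translates a rule into a regular expression by hand. The function names are hypothetical, and this is an approximation of the matching behavior, not any search engine’s actual code.

```python
import re


def rule_to_regex(rule_path: str) -> re.Pattern:
    """Translate a robots.txt path pattern into a compiled regex."""
    anchored = rule_path.endswith("$")
    if anchored:
        rule_path = rule_path[:-1]
    # Escape every literal piece, then let each * match any run of characters.
    body = ".*".join(re.escape(piece) for piece in rule_path.split("*"))
    return re.compile(body + (r"\Z" if anchored else ""))


def rule_matches(rule_path: str, url_path: str) -> bool:
    """Return True if the rule pattern covers the given URL path."""
    # re.match anchors at the start, which mirrors robots.txt prefix matching.
    return rule_to_regex(rule_path).match(url_path) is not None


for url in ("/files/report.pdf", "/files/report.pdf?download=1"):
    print(url, "->", rule_matches("/*.pdf$", url))
```

The second URL is a useful reminder: because of the `$`, a PDF link with a query string on the end is not covered by the rule.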

Important Note: Robots.txt doesn’t hide pages from Google completely. It just tells Google not to crawl them. If other websites link to a blocked page, it might still appear in search results (without a description).

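Want to completely hide a page? Use a noindex meta robots tag (and leave the page crawlable, because Google can only see the tag on pages it is allowed to fetch) or password protection instead.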
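If you want to confirm that a page really carries a noindex meta robots tag, a few lines of Python can check the HTML for you. This is a minimal sketch using the standard `html.parser` module; the class and function names are made up, and it only looks at the meta tag, not at HTTP headers.

```python
from html.parser import HTMLParser


class RobotsMetaFinder(HTMLParser):
    """Collect the directives found in <meta name="robots"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = {name: (value or "") for name, value in attrs}
        if attributes.get("name", "").lower() == "robots":
            # The content attribute is a comma-separated list of directives.
            for item in attributes.get("content", "").split(","):
                self.directives.add(item.strip().lower())


def has_noindex(html: str) -> bool:
    """Return True if the page asks search engines not to index it."""
    finder = RobotsMetaFinder()
    finder.feed(html)
    return "noindex" in finder.directives


page = '<html><head><meta name="robots" content="noindex, nofollow"></head></html>'
print(has_noindex(page))
```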

How LLMs.txt Is Supposed to Work (But Doesn’t… Yet)

The theory behind LLMs.txt sounds smart on paper.

The Proposal:

You create a file at yourwebsite.com/llms.txt that provides:

- A summary of what your website is about

- Links to your most important pages

- Markdown files (.md) with curated versions of your content

- Instructions for how AI should interpret your information

Example of what LLMs.txt might look like:

# My Website Name

> Brief description of what we do

## Key Pages

- [About Us](/about.md)

- [Services](/services.md)

- [Blog](/blog.md)

The idea is: Instead of AI crawling your entire website and potentially misunderstanding your content, it reads this simplified version and gets accurate information.
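If you do decide to experiment, the file is simple enough to generate with a short script. The sketch below just reproduces the layout of the example above; the function name and argument shapes are my own assumptions, not part of the proposal.

```python
def build_llms_txt(site_name, summary, sections):
    """Build llms.txt text in the layout shown in the example above.

    sections maps a heading to a list of (link title, link URL) pairs.
    """
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url in links:
            lines.append(f"- [{title}]({url})")
        lines.append("")
    # End the file with exactly one newline.
    return "\n".join(lines).rstrip() + "\n"


print(build_llms_txt(
    "My Website Name",
    "Brief description of what we do",
    {"Key Pages": [("About Us", "/about.md"),
                   ("Services", "/services.md"),
                   ("Blog", "/blog.md")]},
))
```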

Sounds perfect, right?

The Uncomfortable Truth About LLMs.txt (That SEO Tools Won’t Tell You)

Here’s where I need to be brutally honest with you.

Major SEO tools and plugins are adding LLMs.txt features. Semrush audits flag missing llms.txt files as “issues.” WordPress SEO plugins offer llms.txt generators. Marketing platforms promote it as essential for “AI visibility.”

But here’s what Google’s John Mueller said on Reddit:

“Especially in SEO, it’s important to catch misleading & bad information early, before you invest time into doing something unnecessary. Question everything.”

He was responding to someone confused about why their SEO tool said they needed llms.txt.

Let me explain what’s happening:

There’s a massive misinformation loop. SEO tool companies add llms.txt features because users ask for them. Users ask for them because they see other companies offering them. Other companies offer them because SEO tools are flagging it. And around and around it goes.

Nobody wants to miss the “next big thing” in SEO, so everyone’s jumping on the bandwagon – even though the train hasn’t left the station yet.

Why AI Platforms Aren’t Using LLMs.txt (And Probably Won’t)

Let me share something that makes total sense once you think about it.

The Trust Problem:

LLMs.txt is inherently untrustworthy. Here’s why.

When Google crawls your website, it sees the same content your users see. That’s trustworthy.

But with LLMs.txt, you’re creating a separate version of your content specifically for AI. See the problem?

What’s stopping someone from:

- Adding keywords that aren’t actually on their site?

- Creating misleading summaries to rank better in AI results?

- Hiding spam or manipulated content in markdown files?

- Gaming the system by telling AI lies about their website?

Research suggests this is a real concern. A 2024 study called “Adversarial Search Engine Optimization for Large Language Models” showed that attackers could trick AI platforms into promoting their content using hidden prompts and manipulated text.

The researchers found that they could make a camera 2.5 times more likely to be recommended by AI just by adding hidden persuasion text.

AI platforms know this risk.

Why would ChatGPT or Perplexity trust a file that you created specifically to influence them? It’s safer to just read your actual website content – the same content your human visitors see.

That’s probably why no major AI platform has adopted llms.txt.

What SEO Plugins Are Saying (The Good, The Bad, and The Honest)

Different SEO companies are taking different approaches to llms.txt. Let me show you who’s being honest and who’s… well, let’s just say “optimistic.”

The Honest Approach – Squirrly SEO:

Squirrly added llms.txt to their plugin, but they were upfront about it:

“I know that many of you love using Squirrly SEO and want to keep using it. Which is why you’ve asked us to bring this feature. So we brought it. But, because I care about you: know that LLMs txt will not help you magically appear in AI search. There is currently zero proof that it helps with being promoted by AI search engines.”

Translation: “You wanted it, here it is, but don’t expect miracles.”

I respect this honesty.

The Overpromising Approach – Rank Math:

Rank Math takes a different stance:

“So when an AI chatbot tries to summarize or answer questions based on your site, it doesn’t guess—it refers to the curated version you’ve given it. This increases your chances of being cited properly, represented accurately, and discovered by users in AI-powered results.”

The problem? This simply isn’t true. AI chatbots don’t refer to llms.txt files because they don’t use them at all.

The Middle Ground – Yoast SEO:

Yoast hedges their bets with careful language: “can” and “could” instead of “will” and “does.”

This is fair – they’re not making false promises, but they’re also not being as blunt as Squirrly.

Should You Use Robots.txt? (Spoiler: Yes, Absolutely)

Short answer: Yes. Every website needs robots.txt.

Let me tell you when and why.

You absolutely need robots.txt if:

- You have admin or login pages

- You have duplicate content (like printer-friendly versions)

- You want to manage how Google crawls your site

- You have pages under construction

- You have search result pages (like /search?q=something)

- You have thank-you pages after form submissions

How to create a robots.txt file:

Method 1: Manual Creation

Open a text editor (Notepad on Windows, TextEdit on Mac in plain-text mode). Type your rules. Save the file as “robots.txt” (not robots.txt.txt). Upload it to your website’s root directory.

Method 2: Using WordPress Plugins

Yoast SEO, Rank Math, or All in One SEO Pack all let you edit robots.txt directly from your dashboard.

Method 3: Using Your Hosting Provider

Many hosting control panels, such as cPanel or Plesk, let you create or edit robots.txt through their built-in file manager.

Pro Tips for Robots.txt:

Always allow Googlebot to access CSS and JavaScript files. Don’t use robots.txt to hide sensitive information (use password protection instead). Check your robots.txt with the robots.txt report in Google Search Console (the older standalone robots.txt Tester has been retired). Keep it simple; complicated rules can backfire.

Should You Use LLMs.txt? (Spoiler: Not Really… Yet)

Short answer: Probably not worth your time right now.

Here’s my honest take:

If you’re a major brand with lots of resources and want to experiment – sure, go ahead. Create an llms.txt file. It might help someday. Maybe one AI platform will start using it.

But if you’re a small business, startup, or solo entrepreneur? Don’t waste time on it.

Focus on things that actually work today:

- High-quality content

- Proper on-page SEO

- Good website structure

- Fast loading speeds

- Mobile optimization

- Real backlinks

These things help you rank in Google today AND prepare you for AI search tomorrow.

When might llms.txt become useful?

If and when major AI platforms like OpenAI or Google announce they’re supporting it. When you see official documentation from ChatGPT or Perplexity about how to use it. When there’s actual evidence that it improves AI visibility.

Until then? It’s speculation.

The Real Way to Show Up in AI Search Results (What Actually Works)

Want to know how to really get cited by ChatGPT, Perplexity, and other AI platforms?

Here’s what actually works:

Create Comprehensive Content

AI platforms love content that thoroughly answers questions. Not 500-word fluff pieces. Real, detailed, helpful content.

Use Clear Structure

Headers, bullet points, clear explanations. Make your content easy for AI to understand and extract.

Build Authority

Get mentioned on reputable websites. When AI sees you cited on trustworthy sources, you become a trustworthy source.

Answer Specific Questions

AI loves content that directly answers what people ask. Use question-based headings.

Keep Content Updated

Fresh content gets prioritized. Update your articles regularly.

Make Content Accessible

Don’t hide your best content behind paywalls or logins. AI can’t crawl what it can’t see.

Focus on E-E-A-T:

- Experience: Show you’ve actually done what you’re talking about

- Expertise: Demonstrate knowledge

- Authoritativeness: Get recognized by others

- Trustworthiness: Be accurate and honest

These principles work for Google AND AI search.

Common Mistakes People Make with Robots.txt

Let me save you from these painful errors:

Mistake 1: Blocking Important Pages

Disallow: /blog/

Congratulations, you just told Google to ignore your entire blog. Don’t laugh – this happens more than you think.

Mistake 2: Using Robots.txt to Hide Duplicate Content

Robots.txt stops crawling, not indexing. Google might still show blocked pages in search results if they’re linked from other sites.

Better solution: Use canonical tags or 301 redirects.

Mistake 3: Blocking CSS and JavaScript

Disallow: /*.css$

Disallow: /*.js$

This breaks how Google renders your pages. Google specifically says: Don’t do this.

Mistake 4: Forgetting the Trailing Slash

Disallow: /admin blocks /admin AND /administration AND /administrator (plus everything under /admin/).

Disallow: /admin/ blocks only /admin/ and the URLs beneath it.
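You can watch the trailing slash make a difference with a tiny experiment. This sketch uses Python’s standard `urllib.robotparser`; the helper name `blocked_paths` and the sample paths are just for illustration.

```python
from urllib.robotparser import RobotFileParser


def blocked_paths(rule, paths):
    """Return the paths that a single rule blocks for all bots."""
    parser = RobotFileParser()
    parser.parse(["User-agent: *", rule])
    return [path for path in paths if not parser.can_fetch("*", path)]


PATHS = ["/admin", "/admin/users", "/administration", "/administrator"]

print("Without slash:", blocked_paths("Disallow: /admin", PATHS))
print("With slash:   ", blocked_paths("Disallow: /admin/", PATHS))
```

Without the slash, all four paths are blocked. With it, only /admin/users is, because rules are matched as plain prefixes of the URL path.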

Subtle difference, huge impact.

Mistake 5: Not Testing

Always test your robots.txt in Google Search Console. One typo can block your entire site.

How to Check If Your Robots.txt Is Working

Quick Test:

Go to yourwebsite.com/robots.txt in your browser. If you see your rules, it’s working at the basic level.

Proper Test Using Google Search Console:

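Log into Google Search Console. Open Settings and find the robots.txt report, which shows the robots.txt files Google found, when it last fetched them, and any errors or warnings. To check a single page, paste its URL into the URL Inspection tool at the top of the screen. The result shows whether crawling is allowed or blocked by robots.txt.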

Pro Tip: Test both URLs you want blocked AND URLs you want allowed. Make sure both work as expected.
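That tip is easy to automate before you upload anything. Below is a rough sketch of a local check built on Python’s standard `urllib.robotparser`; the function name, the sample file, and the paths are all assumptions for the example. Python’s parser ignores wildcards and resolves conflicts differently from Google, so use this as a first sanity check and Search Console as the final one.

```python
from urllib.robotparser import RobotFileParser


def find_surprises(robots_txt, expectations, user_agent="*"):
    """Return the paths whose allowed/blocked status differs from what you expect.

    expectations maps a path to True (should be allowed) or False (should be blocked).
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    surprises = []
    for path, should_be_allowed in expectations.items():
        if parser.can_fetch(user_agent, path) != should_be_allowed:
            surprises.append(path)
    return surprises


# A deliberately broken file: the blog is blocked by accident.
ROBOTS_TXT = """\
User-agent: *
Disallow: /blog/
Disallow: /admin/
"""

EXPECTATIONS = {
    "/admin/": False,            # should be blocked
    "/blog/hello-world": True,   # should be allowed
    "/": True,                   # should be allowed
}

print(find_surprises(ROBOTS_TXT, EXPECTATIONS))
```

An empty list means every URL behaves the way you intended.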

The Future: What’s Coming in 2026 and Beyond

Let’s talk about where all this is heading.

For Robots.txt:

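It’s not going anywhere. It’s fundamental to how the web works, and it was formalized as an internet standard (RFC 9309) in 2022. Search engines differ on the extras: Bing honors “Crawl-delay”, for example, while Google ignores it, and Google stopped honoring the unofficial “Noindex” rule in robots.txt in 2019. But the core concept stays the same.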

For LLMs.txt:

This is where things get interesting.

Scenario 1: It Gets Adopted

Maybe OpenAI, Google, or Anthropic announces support. Then it becomes the new standard. Everyone scrambles to implement it. SEO changes forever.

Scenario 2: It Dies Quietly

Like many proposed standards before it, llms.txt fades away. AI platforms continue using their own methods. We move on to the next trend.

Scenario 3: Something Better Emerges

A new, more trustworthy standard gets developed. Major AI companies actually participate in creating it. That becomes the standard instead.

My prediction?

If llms.txt does get adopted, it’ll be in a modified form with trust mechanisms built in. Think digital signatures, verification systems, or blockchain-style validation.

Right now? I wouldn’t hold my breath.

Robots.txt Best Practices for 2026

Let me give you a template that works for most websites:

User-agent: *

Disallow: /wp-admin/

Disallow: /wp-login.php

Disallow: /cart/

Disallow: /checkout/

Disallow: /my-account/

Disallow: /thank-you/

Allow: /wp-admin/admin-ajax.php

Sitemap: https://yourwebsite.com/sitemap.xml

What this does:

- Blocks admin areas

- Blocks e-commerce pages that search engines have no reason to crawl

- Allows AJAX functionality (important for WordPress)

- Points to your sitemap (helps Google find all your pages)
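You might wonder how the Allow line for admin-ajax.php can win when /wp-admin/ is disallowed a few lines earlier. Google documents that the most specific (longest) matching rule wins, and that a tie goes to Allow. Here is a minimal sketch of that idea for plain prefix rules; it ignores wildcards, the function name is hypothetical, and it is not Google’s actual implementation.

```python
# The path rules from the template above, as (directive, path prefix) pairs.
TEMPLATE_RULES = [
    ("disallow", "/wp-admin/"),
    ("disallow", "/wp-login.php"),
    ("disallow", "/cart/"),
    ("disallow", "/checkout/"),
    ("disallow", "/my-account/"),
    ("disallow", "/thank-you/"),
    ("allow", "/wp-admin/admin-ajax.php"),
]


def is_allowed(path, rules=TEMPLATE_RULES):
    """Apply longest-match-wins to simple prefix rules."""
    best = None
    for directive, prefix in rules:
        if path.startswith(prefix):
            # Longer prefixes rank higher; on equal length, allow beats disallow.
            candidate = (len(prefix), directive == "allow")
            if best is None or candidate > best:
                best = candidate
    # No matching rule means the path is allowed by default.
    return True if best is None else best[1]


for path in ("/wp-admin/admin-ajax.php", "/wp-admin/options.php", "/blog/"):
    print(path, "->", "allowed" if is_allowed(path) else "blocked")
```

Be aware that simpler parsers, including Python’s built-in one, stop at the first matching rule instead, so they would report admin-ajax.php as blocked with the lines in this order.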

Additional Lines for Specific Needs:

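For URLs with query parameters, a common source of duplicate pages (careful: the first rule blocks every URL that contains a question mark):

Disallow: /*?

Disallow: /*&

For specific bots:

User-agent: GPTBot

Disallow: /

(Yes, OpenAI has a bot called GPTBot that you can block from training on your content!)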

Real Talk: What Every Website Owner Needs to Know

Let me be super direct here.

If you’re running a website in 2026:

Focus 95% of your energy on things that actually move the needle right now. Quality content. Good user experience. Fast loading times. Mobile optimization. Proper SEO basics.

Spend maybe 5% experimenting with new things like llms.txt if you have the time and resources.

Don’t let shiny new objects distract you from fundamentals.

If you’re working with an SEO freelancer in Bangalore (or anywhere else), ask them this simple question:

“Is this going to help me rank better TODAY, or is this speculation about the future?”

Good SEO professionals will tell you the truth. They won’t waste your time on unproven tactics just because everyone’s talking about them.

They’ll focus on:

- Technical SEO that works now

- Content strategies with proven ROI

- Link building that’s sustainable

- Analytics that show real results

- Strategies that adapt to both current search engines and emerging AI platforms

That’s the difference between trendy SEO and effective SEO.

Your Action Plan: What to Do Right Now

Okay, let’s make this practical.

Today:

- Check if you have a robots.txt file (yourwebsite.com/robots.txt)

- If you don’t have one, create a basic one

- Test it in Google Search Console

This Week:

- Review your robots.txt rules

- Make sure you’re not accidentally blocking important pages

- Add your sitemap location to robots.txt

This Month:

- Focus on creating quality content

- Improve your site speed

- Optimize for mobile

- Build real backlinks

About llms.txt:

- Don’t stress about it

- Monitor news from major AI platforms

- If they announce support, you’ll have time to implement it

- Until then, focus on what works

The Bottom Line: Which One Should You Use?

Robots.txt? Absolutely yes. It’s essential, proven, and used by every search engine that matters.

LLMs.txt? Not necessary right now. It’s an interesting idea, but no AI platform uses it. Don’t let SEO tools scare you into thinking you need it.

The real secret to showing up in both Google and AI search?

Create genuinely helpful content. Structure it well. Make it accessible. Build authority. Stay updated.

That worked in 2000. It works in 2026. It’ll work in 2030.

Everything else is just tactics. Good content is the strategy.

Final Thoughts from an SEO Freelancer’s Perspective

As someone who’s watched SEO evolve over the years, I’ve seen countless “game-changers” come and go.

Remember when everyone said meta keywords were essential? They’re useless now.

Remember when exact match domains guaranteed rankings? Google killed that.

Remember when more backlinks always meant better rankings? Quality now beats quantity.

The lesson? Don’t chase every trend. Focus on fundamentals.

Robots.txt is a fundamental. LLMs.txt is a trend (that might become fundamental someday).

Know the difference.

If you’re looking to work with an SEO freelancer in Bangalore who focuses on proven strategies over hype, find someone who can explain not just what to do, but why it works and why it matters to your specific business goals.

The best SEO professionals don’t just follow trends – they help you understand which trends actually matter for your business and which ones are just noise.

That’s how you build sustainable growth online, whether search engines are powered by algorithms or AI.